Since December, I have been working with one of my newest clients, Alexis Jae, on their jewelry website. The website is brand new, which means we are starting SEO from scratch. One way to drive traffic to the site is through blog posts, so my content team and I have been writing new content. However, Google was not crawling and indexing the blog content. That was frustrating because the URL submission feature in Google Search Console was not available at the time. After about a week with the new posts still missing from the index, I decided to investigate. Here are the steps I took to make sure Google would crawl and index the blogs.

Check the Robots.txt File

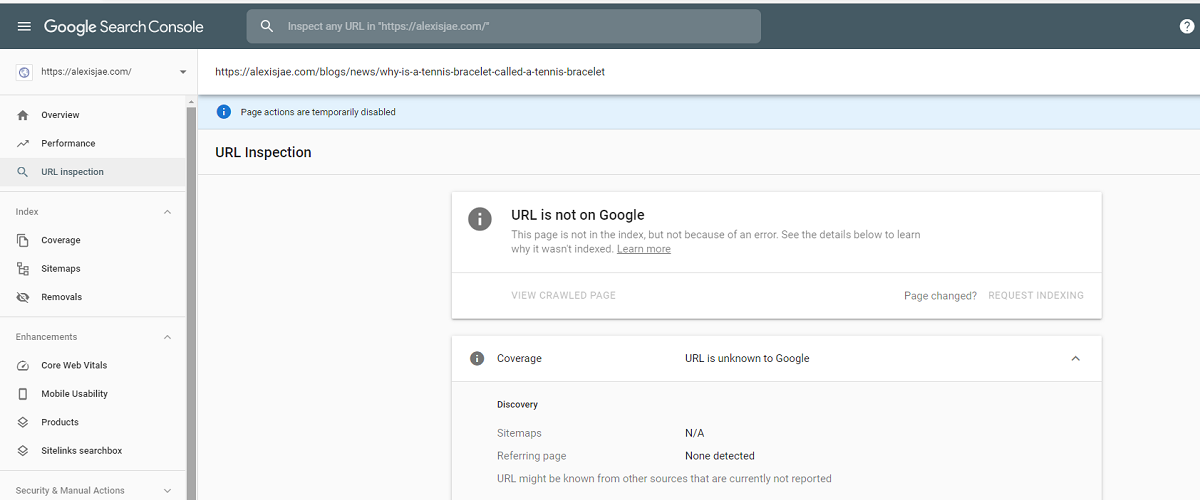

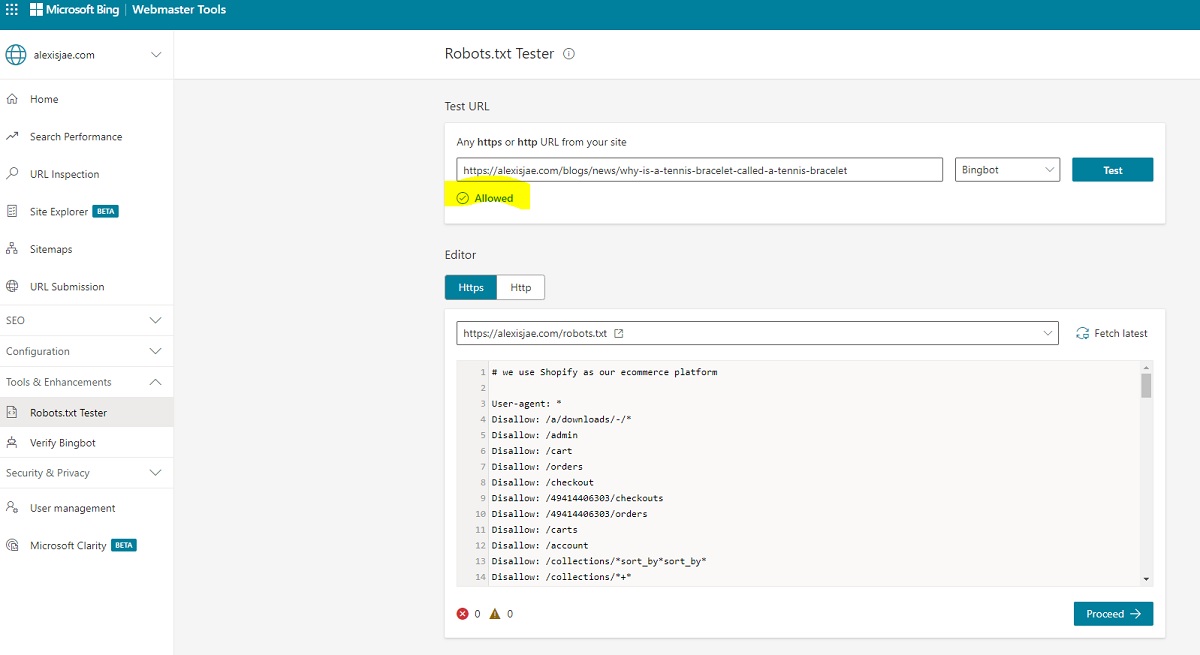

Google Search Console and Bing Webmaster Tools each have a robots.txt tester where you can test any URL. The tool tells you whether a rule in your robots.txt file blocks Google or Bing from crawling that URL. Because the website was new, I was fairly sure robots.txt was not the issue, and testing the blog posts in both tools confirmed it.
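If you would rather spot-check this yourself, Python's standard-library `urllib.robotparser` can answer the same question. This is a minimal sketch, not how the webmaster tools work internally. The robots.txt contents and the example.com blog URLs are hypothetical placeholders. The standard-library parser also uses simple prefix matching, so it does not implement every extension Google supports (such as `*` wildcards inside paths). The Search Console and Bing testers remain the authoritative answer.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents for illustration only. For a real check,
# paste in the file served at https://<your-domain>/robots.txt.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin
Disallow: /cart
Disallow: /checkout
"""

# Hypothetical blog post URLs; replace them with the real post URLs.
BLOG_URLS = [
    "https://example.com/blogs/news/first-post",
    "https://example.com/blogs/news/second-post",
]


def blocked_urls(robots_txt, urls, user_agents):
    """Return (user_agent, url) pairs that the robots.txt rules disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return [
        (agent, url)
        for agent in user_agents
        for url in urls
        if not parser.can_fetch(agent, url)
    ]


if __name__ == "__main__":
    problems = blocked_urls(ROBOTS_TXT, BLOG_URLS, ["Googlebot", "Bingbot"])
    print(problems or "No robots.txt rule blocks these URLs.")
```

An empty list means no rule in the pasted file blocks the blog posts for either crawler.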

Check for NOINDEX Tags

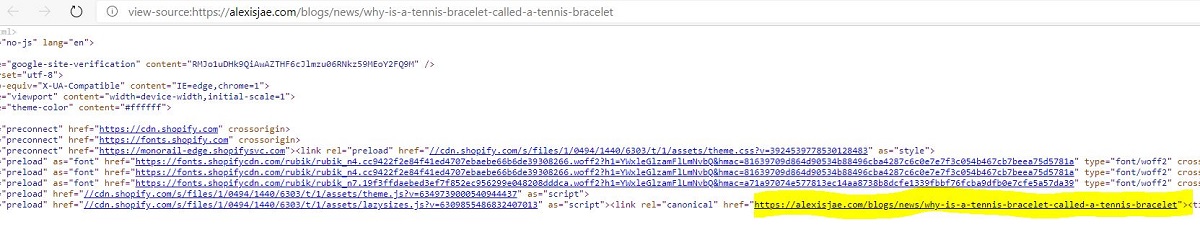

Content that is missing from Google or Bing could be the result of a NOINDEX tag. A NOINDEX tag is a meta robots tag with a noindex value. It tells Google and Bing not to show the page in their search results, so when they crawl it, they leave it out of the index. When I looked at the page source, I did not find that tag, so it was not the issue.
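Checking the page source by hand works, but the same check is easy to script. The sketch below is a hypothetical helper built on Python's standard `html.parser`. It looks for `noindex` in `<meta name="robots">` tags and in the crawler-specific `googlebot` and `bingbot` meta tags. It also flags `none`, which Google treats as noindex plus nofollow. It only inspects the HTML, so a noindex sent in an `X-Robots-Tag` HTTP header would not show up here.

```python
from html.parser import HTMLParser

# Meta tag names that carry indexing directives. "robots" applies to all
# crawlers; "googlebot" and "bingbot" apply only to that search engine.
ROBOTS_META_NAMES = {"robots", "googlebot", "bingbot"}


class RobotsMetaFinder(HTMLParser):
    """Collect the directives from robots-style <meta> tags in a page."""

    def __init__(self):
        super().__init__()
        self.directives = {}  # meta name -> list of lowercase directives

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        meta_name = attr.get("name", "").strip().lower()
        if meta_name in ROBOTS_META_NAMES:
            values = [d.strip().lower() for d in attr.get("content", "").split(",")]
            self.directives.setdefault(meta_name, []).extend(v for v in values if v)


def noindex_sources(html):
    """Return the sorted meta names whose directives include noindex or none."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return sorted(
        name
        for name, values in finder.directives.items()
        if "noindex" in values or "none" in values
    )


if __name__ == "__main__":
    # Hypothetical page source; paste in the real source of a blog post.
    page = '<head><meta name="robots" content="index, follow"></head>'
    print(noindex_sources(page) or "No noindex directive found.")
```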

Check the Canonical Tag

With robots.txt and the meta robots tag ruled out, I looked at the canonical tag next. I have sometimes seen Shopify output the wrong canonical tag on a page, which leads to indexing issues. The canonical tag on the blog posts was correct, so that was not the problem either.
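The canonical check can also be scripted. This hypothetical Python helper uses only the standard library. It reads the `<link rel="canonical">` tags from a page's HTML and reports a missing tag, conflicting tags, or a canonical that points to a different URL than the page itself. It is a sketch under simplifying assumptions. Before comparing, it only lowercases the scheme and host and drops any fragment, so a trailing-slash difference counts as a mismatch. A missing canonical tag is flagged for a closer look rather than treated as an error on its own.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit, urlunsplit


class CanonicalFinder(HTMLParser):
    """Collect every <link rel="canonical"> href on a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = {name: (value or "") for name, value in attrs}
        if "canonical" in attr.get("rel", "").lower().split() and attr.get("href"):
            self.hrefs.append(attr["href"].strip())


def normalize(url):
    """Lowercase scheme and host and drop the #fragment; keep path and query."""
    parts = urlsplit(url)
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), parts.path or "/", parts.query, "")
    )


def canonical_problem(page_url, html):
    """Return a short description of a canonical problem, or None if it looks fine."""
    finder = CanonicalFinder()
    finder.feed(html)
    # Resolve relative hrefs against the page URL before comparing.
    canonicals = {normalize(urljoin(page_url, href)) for href in finder.hrefs}
    if not canonicals:
        return "no canonical tag"
    if len(canonicals) > 1:
        return "multiple conflicting canonical tags"
    target = canonicals.pop()
    if target != normalize(page_url):
        return f"canonical points elsewhere: {target}"
    return None
```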

Check One Level Up

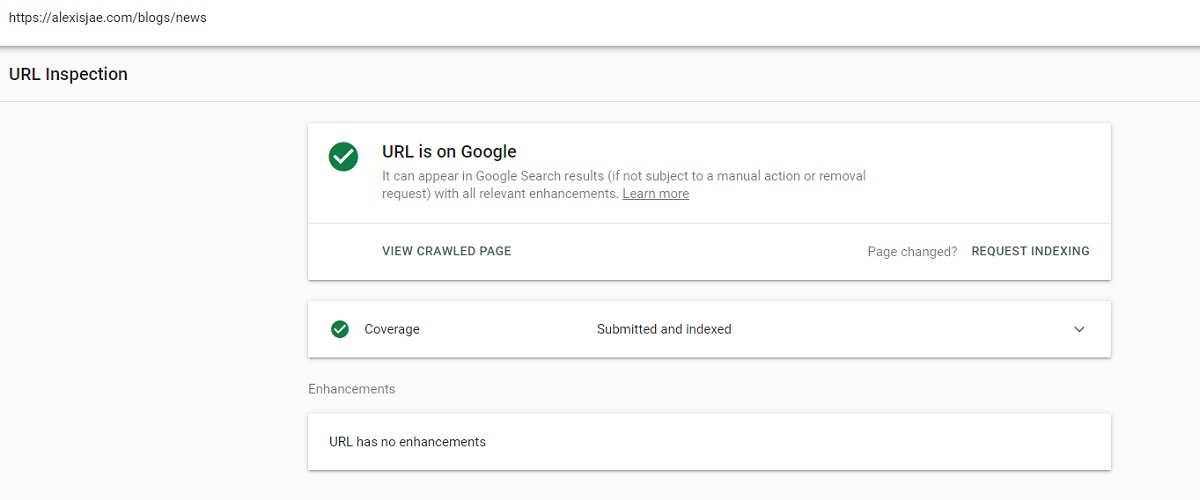

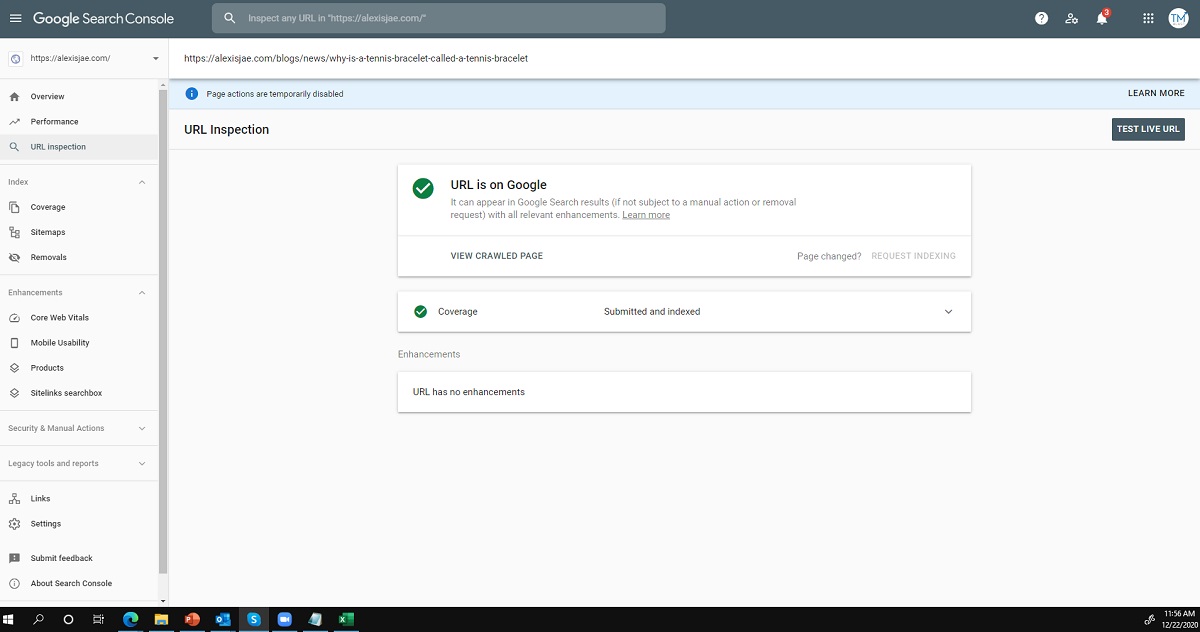

My client has a blog homepage that houses the two blog posts. Perhaps the problem was on that blog homepage rather than on the posts themselves, so I ran the same checks on it as well. That page had none of these issues. I also checked Google Search Console to confirm that the blog homepage was in Google’s index, and it was.

Add Two Direct Links to the Footer + XML Sitemap Submission

With these possible errors ruled out, I gave the search engines a direct path to crawl and index the content. One strategy I’ve used with clients is to add direct links to the blog posts in the footer. Search engines follow the links they find on a page. Because the footer appears on every page of the site, Google and Bing would find links to the blogs on any page they crawl.
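After adding the footer links, it is worth confirming that they actually appear in the rendered page. Below is a minimal sketch using Python's standard library. It assumes the theme wraps its footer in an HTML `<footer>` element. Many themes do, but yours may use a `<div>` with a footer class instead, in which case the check needs adjusting. The example.com URLs in the test are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class FooterLinkCollector(HTMLParser):
    """Collect absolute link targets that appear inside <footer> elements."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.footer_depth = 0  # > 0 while the parser is inside a <footer>
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag == "footer":
            self.footer_depth += 1
        elif tag == "a" and self.footer_depth:
            href = dict(attrs).get("href")
            if href:
                # Resolve relative links and drop any #fragment.
                self.links.add(urldefrag(urljoin(self.base_url, href)).url)

    def handle_endtag(self, tag):
        if tag == "footer" and self.footer_depth:
            self.footer_depth -= 1


def missing_footer_links(page_url, html, targets):
    """Return the target URLs that no footer link points to."""
    collector = FooterLinkCollector(page_url)
    collector.feed(html)
    return [url for url in targets if url not in collector.links]
```

Links elsewhere on the page do not count, so an empty result means every blog post is linked from the footer itself.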

As a safety net, I also resubmitted the XML sitemap in Google Search Console and Bing Webmaster Tools after adding the footer links. Since I don’t perform log file analysis for this client, I didn’t know how often the search engines were crawling the XML sitemap file. Resubmitting the sitemap is quick and easy in both tools, but the footer links were the primary fix for this problem.
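Before resubmitting, you can also confirm that the blog post URLs are actually listed in the sitemap. This sketch uses Python's `xml.etree.ElementTree` and the standard sitemaps.org namespace. A sitemap file is either a `urlset` of page URLs or a `sitemapindex` that points to child sitemaps. The helper reports which kind it found. The membership check only works on a `urlset`, so with an index you would run it on each child sitemap. The URLs in the test are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_locs(xml_text):
    """Return (kind, locs): kind is 'urlset' or 'sitemapindex'."""
    root = ET.fromstring(xml_text)
    kind = root.tag.split("}")[-1]  # strip the "{namespace}" prefix
    entries = root.findall("sm:url/sm:loc", NS) + root.findall("sm:sitemap/sm:loc", NS)
    return kind, [loc.text.strip() for loc in entries if loc.text]


def missing_from_sitemap(xml_text, urls):
    """Return the URLs that a urlset sitemap does not list."""
    kind, locs = sitemap_locs(xml_text)
    if kind != "urlset":
        raise ValueError(f"got a {kind}; run this on each child sitemap instead")
    listed = set(locs)
    return [url for url in urls if url not in listed]
```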

Wait a Day and Confirm

A day after adding the footer links, I checked Google and Bing to confirm whether the blogs were in the index. They were: adding the footer links got the content indexed, which opens the door to future SEO traffic. With a new site, I know it will take time to reach page one of Google for the target keywords. However, getting content into the index is the first step toward ranking.

Tracking the Results

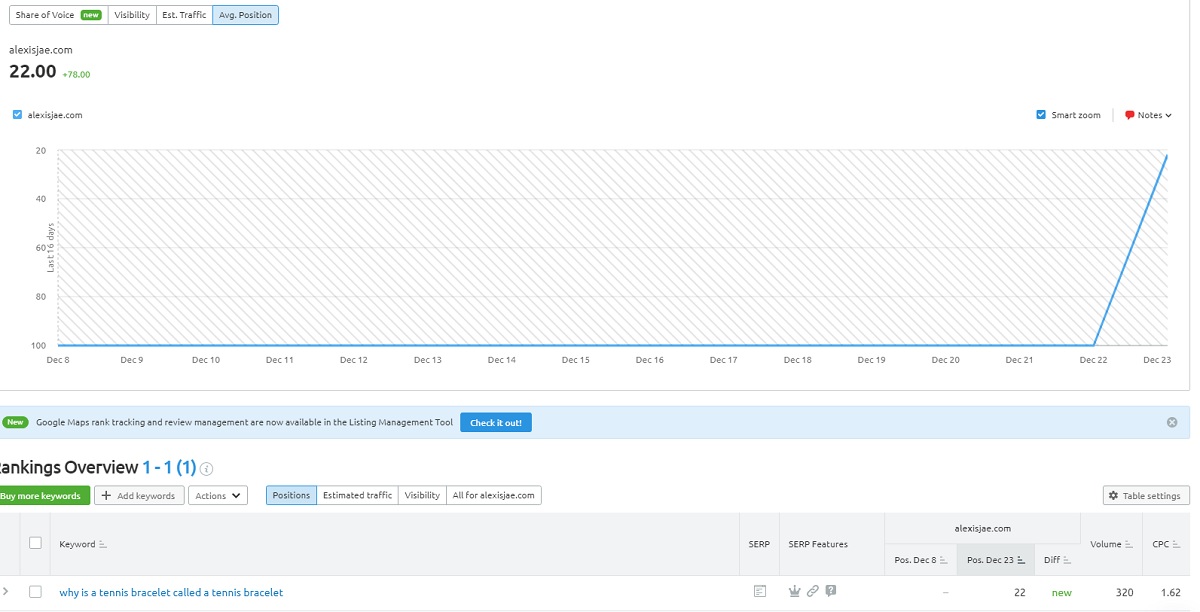

I use SEMRush to track my clients’ keyword rankings daily. Here is an example of a keyword that had never ranked for their site but is now on page 3. I expect it to reach page one of Google in a month or so, but this is already a win.

If you would like to track your keywords for free, you can use my 14-day free trial of SEMRush!

Conclusion

The same issue also happened on Red Truck Jerky’s site, which also runs on Shopify. If you are using Shopify, it is worth following these steps just in case.

TM Blast is a New York SEO company serving local and national SEO clients around the United States. In addition to SEO services, I offer a Free SEO Workshop where you can learn SEO for free, as well as a Free SEO Analysis for any website owner’s site!

Greg Kristan, owner of TM Blast, LLC and The Stadium Reviews, LLC, has 10+ years of SEO experience. He was also the SEO Manager at edX and a contractor for Microsoft Bing Ads. Today, he optimizes local, national, and international company websites to rank higher in search engines. Greg has also been featured on podcasts about his search experience and regularly updates his YouTube channel with digital marketing tips. Do you want to reach out to me about SEO help? If so, email me at greg@tmblast.com or call 877-425-2141.